ASP.NET Caching - Great for performance, tough on testing

To help improve the performance of our company web site (www.GibraltarSoftware.com) under load, we’ve been working with the output caching capabilities of ASP.NET. On the plus side, when it works it really does make a difference: every page on the site is generated dynamically so we can take advantage of master pages, membership, and a few other capabilities, but the truth is that the vast majority of the content is static.

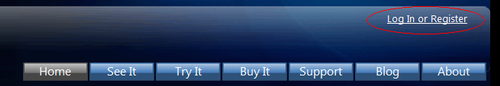

The login status has to be generated on each page hit

Fortunately, with the use of the Donut Caching techniques discussed by Scott Guthrie we were able to make most pages work just great. This let us generate the account information in the upper right on every request without regenerating the rest of the page. Frankly, there’s a lot of room for improvement in how ASP.NET does this (you fall way down to manual HTML generation when you do this, but at least you can do it) but it works just fine.

However, after deploying it we started getting periodic 404 errors on the site that didn’t make a lot of sense. To help us troubleshoot, we added a meta header to every page so we could identify when it was generated. That’s a practice I’d recommend to anyone so you can separate cached pages from fresh pages. When we dug into the 404 errors, they turned out to relate to URLs that we use as landing pages for advertising.

Background on the Problem

Whenever we set up an external ad campaign we create dedicated entry URLs just for that campaign and direct them to the right page in our content. This lets us tweak where ads go and try permutations without having to coordinate with the site where the ads are placed. This has been invaluable in helping us test combinations of ads and text to find the most effective ones.

We’ve been using the built-in URL Mapping capabilities of ASP.NET to let us direct requests for one URL:

http://www.GibraltarSoftware.com/ads/w1a.aspx

to the real page that we want to display, like:

http://www.GibraltarSoftware.com/See/Default.aspx

The trick is that the target page has to render all of its links relative to the URL the client is actually using. ASP.NET supports this as long as every link on the page is run through the ResolveClientUrl method. Easy enough:

<a href="~/See/What_Is_Gibraltar.aspx" runat="server" enableviewstate="false">What Is Gibraltar?</a>

This gets rendered as a minimal relative URL, worked out from whatever URL the client currently has.

Back to the Problem

Looking at the 404 errors, it was clear that something was interfering with this resolution. The requested URL looked like this:

http://www.GibraltarSoftware.com/ads/What_Is_Gibraltar.aspx

Apparently, a version of the page whose links had been resolved against the ad’s target URL was being served to clients that had requested the ad URL itself.

We started experimenting: if we reset the server (causing the cache to be dropped) and hit the ad URL first, view source showed this link:

<a href="../See/What_Is_Gibraltar.aspx">What Is Gibraltar?</a>

In this case everything works correctly, because it turns out that this relative URL resolves to the right page whether the browser thinks it is at the ad URL or at the destination URL.

But if the destination page was hit first, view source showed this link:

<a href="What_Is_Gibraltar.aspx">What Is Gibraltar?</a>
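The difference between the two rendered links is easy to reproduce outside ASP.NET. As a rough sketch, here is Python's standard `urllib.parse.urljoin` standing in for the browser's relative URL resolution (the `resolved_path` helper name is just for illustration):

```python
from urllib.parse import urljoin, urlsplit

AD_URL = "http://www.GibraltarSoftware.com/ads/w1a.aspx"
TARGET_URL = "http://www.GibraltarSoftware.com/See/Default.aspx"

def resolved_path(page_url, href):
    """Return the path a browser would request for href found on page_url."""
    return urlsplit(urljoin(page_url, href)).path

# Try both rendered forms of the link from both locations.
for href in ("../See/What_Is_Gibraltar.aspx", "What_Is_Gibraltar.aspx"):
    for page in (AD_URL, TARGET_URL):
        print(f"{href!r} on {urlsplit(page).path} -> {resolved_path(page, href)}")
```

The longer link lands on /See/What_Is_Gibraltar.aspx from either location. The shorter one only works from the destination URL; from the ad URL it resolves to /ads/What_Is_Gibraltar.aspx, which is exactly the path showing up in our 404s.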

Carefully checking the timestamps demonstrated that we were getting the same cached page for both URLs. It appears the ASP.NET cache engine is looking up the cached page based on the post-URL mapping value instead of the pre-URL mapping value. Some quality time with Google later, we weren’t able to find any documentation of this behavior, only a few cases of folks reporting similar problems. Unfortunately, there’s no apparent way to directly have the output cache vary by client URL because, well - it thinks it always does that.

The Hack

In the end, we got around this with a little trick: in the URL Mappings we added an irrelevant query parameter. Since we had the output cache policy set to vary by query parameter, this forces the output cache to treat the mapped URL as distinct. I’m not thrilled with this answer because it relies on a non-obvious convention to get around a code problem, and if someone gets the convention wrong the mistake can easily be missed in testing.

Original web.config section:

<urlMappings>
  <add url="~/Ads/W1a.aspx" mappedUrl="~/See/Default.aspx"/>
  <add url="~/Ads/W1b.aspx" mappedUrl="~/See/Default.aspx"/>
  <add url="~/Ads/W2a.aspx" mappedUrl="~/See/Default.aspx"/>
  <add url="~/Ads/W2b.aspx" mappedUrl="~/See/Default.aspx"/>
</urlMappings>

Hacked web.config section:

<urlMappings>
  <add url="~/Ads/W1a.aspx" mappedUrl="~/See/Default.aspx?mappedUrl=true"/>
  <add url="~/Ads/W1b.aspx" mappedUrl="~/See/Default.aspx?mappedUrl=true"/>
  <add url="~/Ads/W2a.aspx" mappedUrl="~/See/Default.aspx?mappedUrl=true"/>
  <add url="~/Ads/W2b.aspx" mappedUrl="~/See/Default.aspx?mappedUrl=true"/>
</urlMappings>

Note that it doesn’t matter what we used for the query parameter - we could have just as well put “foo=bar” and gotten the same effect. The point is that since the output cache policy was set to vary by any parameter, this causes the pages that were reached through the URL map to be cached independently of those reached through normal links on our site.
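To make the effect concrete, here is a toy model in Python of the behavior we inferred. It is not how ASP.NET is implemented: the `OutputCache` class, the `render_link` helper, and the lowercase mapping keys are all invented for illustration. The only assumptions it encodes are that the cache is keyed on the mapped URL (query string included) and that a page is rendered against the URL the client asked for.

```python
import posixpath

TARGET_LINK = "/See/What_Is_Gibraltar.aspx"

def render_link(client_path):
    """Render the link as a minimal relative URL from the client's folder."""
    return posixpath.relpath(TARGET_LINK, start=posixpath.dirname(client_path))

class OutputCache:
    """Toy cache keyed on the URL *after* mapping, query string included."""

    def __init__(self, url_mappings):
        self.url_mappings = url_mappings
        self.pages = {}

    def get(self, client_path):
        # Unmapped requests are keyed on their own path.
        key = self.url_mappings.get(client_path, client_path)
        if key not in self.pages:
            # Cache miss: render against the URL the client asked for.
            self.pages[key] = render_link(client_path)
        return self.pages[key]

original = OutputCache({"/ads/w1a.aspx": "/See/Default.aspx"})
hacked = OutputCache({"/ads/w1a.aspx": "/See/Default.aspx?mappedUrl=true"})

for name, cache in (("original", original), ("hacked", hacked)):
    cache.get("/See/Default.aspx")           # destination page is hit first
    print(name, cache.get("/ads/w1a.aspx"))  # then the ad URL
```

With the original mappings the ad URL gets back the link rendered for the destination page (What_Is_Gibraltar.aspx). With the extra query parameter the two requests land in separate cache entries, so the ad URL gets ../See/What_Is_Gibraltar.aspx.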

The Moral of the Story

Output caching can save you a lot of server load and improve the all-important time from the caller’s request to getting the page back (at which time the web browser can make parallel requests to load missing elements and compose the page). This can make a surprising difference to the web site’s apparent performance and on that basis is worth getting familiar with.

Additionally, output caching is a great safety net for when your site gets picked up by Slashdot or linked to from Coding Horror or something. That’s your moment to shine, and you just aren’t going to get there by generating each page from scratch on every request.

When you set up output caching, be sure to include a generated timestamp on each page so you can verify that caching is working when it should, resetting when it should, and you are getting distinct pages when you should. Unfortunately, you can’t take anything for granted.
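A check like this can be scripted. The sketch below, in Python, assumes the timestamp is emitted as a meta tag named `generated`; that name and the helper names are made up for this example, so substitute whatever your pages actually emit. It pulls the stamp out of two saved page sources and reports whether they came from the same cache entry.

```python
from html.parser import HTMLParser

class GeneratedMetaParser(HTMLParser):
    """Collects the content of <meta name="generated"> (a hypothetical name)."""

    def __init__(self):
        super().__init__()
        self.generated = None

    def handle_starttag(self, tag, attrs):
        if tag == "meta":
            attributes = dict(attrs)
            if attributes.get("name") == "generated":
                self.generated = attributes.get("content")

def generated_stamp(html):
    """Return the generation timestamp string found in html, or None."""
    parser = GeneratedMetaParser()
    parser.feed(html)
    return parser.generated

def same_cache_entry(html_a, html_b):
    """True when both pages carry the same, non-missing generation stamp."""
    stamp_a = generated_stamp(html_a)
    stamp_b = generated_stamp(html_b)
    return stamp_a is not None and stamp_a == stamp_b
```

Feed it the source of the ad URL and of the destination URL: if `same_cache_entry` returns True for two URLs that should render different links, you have the problem described here.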